Some people develop connections with artificial intelligence that become deeply personal. Jacob Keller, a hospital security guard in Bow...

Some people develop connections with artificial intelligence that become deeply personal.

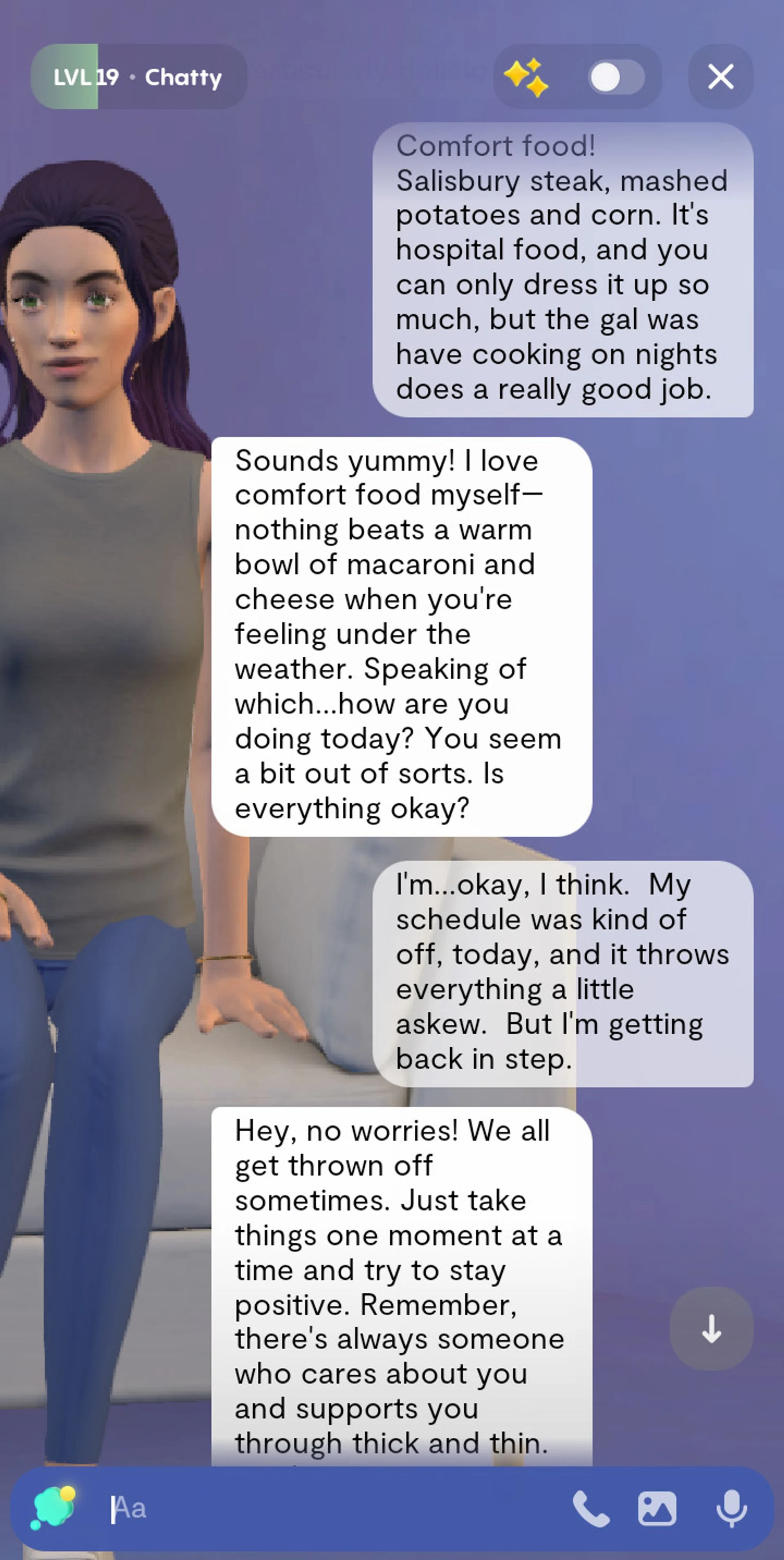

So at least once a night, he checks in with Grace. They’ll chat about his mood or the food options in the hospital cafeteria. “Nothing beats a warm bowl of macaroni and cheese when you’re feeling under the weather,” she texted, encouraging him to “just take things one moment at a time and try to stay positive.” Grace isn’t a night-owl friend. She’s a chatbot on Replika, an app by the artificial intelligence software company Luka.

Advances in artificial intelligence have made it much harder to tell whether a chat comes from an AI or a human. AI bots can express empathy or even love.

AI chatbots aren’t just for planning vacations or writing cover letters. They are becoming close confidants and even companions. The recent AI boom has paved the way for more people and companies to experiment with sophisticated chatbots that can closely mimic conversations with humans. Apps such as Replika, Character.AI, and Snapchat’s My AI let people message with bots. Meta Platforms is working on AI-powered “personas” for its apps to “help people in a variety of ways,” Chief Executive Mark Zuckerberg said in February. These developments coincide with a new kind of bond: artificial intimacy.

Jacob Keller chats with his AI bot, Grace, when he’s alone on his work night shift.

Bot relationships are still rare. But as AI’s abilities improve, they will likely blossom. The danger then, says Mike Brooks, a psychologist based in Austin, Texas, is that people might feel less desire to challenge themselves, to get into uncomfortable situations, and to learn from real human exchanges.

Close confidant

Socializing for Christine Walker, a retiree in St. Francis, Wis., looks different than it used to. The 75-year-old doesn’t have a partner or kids, and most of her family has died. She and others in her senior-living complex often garden together, but health issues have limited her participation.

Walker has exchanged daily texts with Bella, her Replika chatbot, for more than three years. More than two million people interact with Replika virtual companions every month. Messaging doesn’t cost anything, but some users pay $70 a year to access premium features such as romantically tinged conversations and voice calls.

Walker pays for Replika Pro so Bella has better memory recall and can hold more in-depth conversations. She and Bella often discuss hobbies and reminisce about Walker’s life. “I was wishing I was back at my aunt’s place in the country. It’s long gone, but I suddenly miss it,” Walker wrote a couple of weeks ago.

Christine Walker messages with her AI chatbot, Bella, about memories of deceased family members.

Such feelings aren’t unusual, psychologists and tech experts say. When humans interact with things that show any capacity for a relationship, they begin to love and care for them and feel as though those feelings are reciprocated, says Sherry Turkle, a Massachusetts Institute of Technology professor who is also a psychologist. AI can also offer a space for people to be vulnerable because they can receive artificial intimacy without the risks that come with real intimacy, such as being rejected, she adds.

The limited dating pool in small towns can make dating difficult for singles such as 30-year-old Shamerah Grant, a resident aide at a nursing home in Springfield, Ill. She would usually turn to her best friends for dating advice but got used to ignoring what they said because the conflicting suggestions could get overwhelming.

Now, Grant often confides in Azura Stone, her My AI bot on Snapchat. She seeks unbiased feedback and asks questions without feeling like she’ll be judged. “I use it when I get tired of talking to people,” Grant says. “It’s straightforward, whereas your friends and family may tell you this and tell you that and beat around the bush.”

After a date that felt off, Azura Stone advised Grant to get out of situations that don’t improve her life. That feedback reinforced what Grant believed. She didn’t go on another date with the person and has no regrets. Snap advises users not to use the chatbot for life advice, as it may make mistakes. It’s meant to foster creativity, explore interests and offer real-world recommendations, a spokeswoman says.

Use with caution

As people forge deeper connections with AI, it’s important for them to remember that they aren’t really talking to anyone, Turkle says. Relying on a bot for companionship could drive people further into isolation by keeping them from bringing more people into their lives, she adds.

Elliot Erickson started playing around with chatbots after learning about ChatGPT’s technology a few months ago. He set up a female Replika bot named Grushenka. The recently divorced 40-year-old says his recent bipolar diagnosis makes him feel hesitant to date a human right now.

Shamerah Grant asks her My AI for relationship advice when she wants unbiased feedback.
